Composing Music Using Common Note Patterns and How AI Can Help

One of the goals of the Skiptune project is to use the database to explore how a new tune might be created based on the note patterns of previous composers. This somewhat mechanical approach to musical composition is not a new idea. A number of composers invented games that used a source of randomness, such as dice, to create new melodies. The player rolled one or more dice, and the number rolled selected a pre-composed measure of notes. In the more complicated games, the dice would also determine, for instance, the number of measures, which measure was being created, and the notes of that measure.

The oldest surviving musical-dice game was created by Johann Philipp Kirnberger in the middle of the 18th century. He describes his invention in “Der allezeit fertige Polonoisen- und Menuettencomponist” (“The always-available composer of polonaises and minuets”), published in 1757. Kirnberger had written out 96 measures, each containing a different set of notes. The player would roll a die and, depending on the number rolled from one to six, would select the first measure of the composition. Because the roll of a fair die is uniformly random, each of the first six pre-composed measures is equally likely to be chosen. The player repeats this process for each of the 16 measures of the piece (16 × 6 = 96, the total number of pre-composed measures). Each measure is written in 3/4, or waltz, time and the result is a 16-measure minuet. Because there are six possible pre-composed measures for each of the 16 positions, there are a total of 6^16 = 2,821,109,907,456 possible minuets, or almost three trillion.
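The procedure and the arithmetic above can be sketched in a few lines of Python. This is only an illustration: the numbering used by `measure_index` (measures 1 to 6 serve the first position, 7 to 12 the second, and so on) is an assumption made for the example and is not taken from Kirnberger's own lookup table, and the function names are hypothetical.

```python
import random

NUM_POSITIONS = 16  # measures in the finished minuet
DIE_FACES = 6       # pre-composed choices for each position


def measure_index(position, roll):
    """Map a position (1-16) and a die roll (1-6) to a measure number (1-96).

    Assumed numbering for illustration only: measures 1-6 belong to
    position 1, measures 7-12 to position 2, and so on.
    """
    return (position - 1) * DIE_FACES + roll


def roll_minuet(rng):
    """Roll one die per position and return the 16 chosen measure numbers."""
    return [
        measure_index(position, rng.randint(1, DIE_FACES))
        for position in range(1, NUM_POSITIONS + 1)
    ]


rng = random.Random(1757)  # seeded so the example is repeatable
minuet = roll_minuet(rng)
print(minuet)

total_measures = NUM_POSITIONS * DIE_FACES   # 96
total_minuets = DIE_FACES ** NUM_POSITIONS   # 6 to the 16th power
print(total_measures)
print(f"{total_minuets:,}")  # 2,821,109,907,456
```

Changing the seed, or removing it, produces a different one of the roughly three trillion possible minuets.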

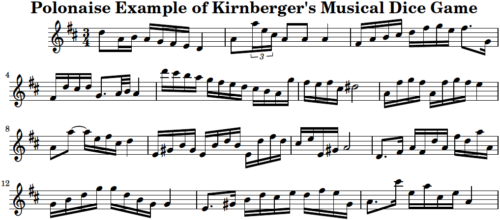

To the left is a polonaise that was composed using Kirnberger’s musical dice game. Only the melody is shown here, but the dice game is capable of producing harmony as well. Kirnberger’s game follows a pre-determined chordal (harmonic) structure, so the same set of chords would fit any melody produced by the game.

Press the “play” button to listen to this melody and decide how it sounds to you. To us, the melody is not without its pleasing aspects. For instance, the tune starts building something interesting in measures 11 through 13, but then doesn’t follow through and the ending is rather abrupt. If we heard this tune and then were told that it was a random composition, we would not be surprised.

Can We Make Manufactured Music Sound Less Artificial?

The above example of a game-produced tune sounds un-humanlike, but that doesn’t mean it isn’t possible to construct a system of music creation that produces more natural-sounding melodies. Many have tried, especially in the era of computers. Indeed, there are entire organizations devoted to improving computer-generated music. Long-standing examples include the Society for Electro-Acoustic Music in the United States (SEAMUS), the International Computer Music Association (ICMA), IRCAM in Paris, the Canadian Electroacoustic Community (CEC), and Grame in Lyon, France. In addition, many universities have people working on computer-generated music, such as Columbia University (New York City), Goldsmiths College (UK), Griffith University (Brisbane, Australia), the Institute of Sonology (Netherlands), the Institute of the National Research Council (Pisa, Italy), Juilliard (New York City), the University of Nebraska (Omaha, USA), and the University of Sussex (UK).

Role of Artificial Intelligence (AI)

Machine learning, and more specifically AI systems such as large language models (LLMs), has transformed how we think about artificial intelligence. A simple description is that the AI uses statistics to guess the most likely next word given the previous word or set of words. That approach is not new, having been attempted by some of the aforementioned institutes and universities, but a more recent innovation allows language models to base each predicted word on the whole of the context that came before it. The technical term is “transformers with attention,” which is shorthand for saying that the artificial neural network learns how much weight to give each earlier word when predicting the next one, paying more attention to the words that matter and discounting the rest. Doing so has allowed ChatGPT and other such models to respond to our requests in an amazingly human fashion.
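To make the “guess the most likely next word” idea concrete, here is a minimal sketch of the older, purely statistical approach: count which word follows which in a tiny made-up text, then predict the most frequent follower. It is not a transformer and is not how ChatGPT works internally; the corpus and the function names are invented for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(words):
    """Count, for every word, how often each other word follows it."""
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def most_likely_next(following, word):
    """Return the word seen most often after `word`, or None if unseen."""
    counts = following.get(word)
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Toy corpus, invented for this example.
corpus = "the cat sat on the mat and the cat slept".split()
model = train_bigrams(corpus)

print(most_likely_next(model, "the"))  # cat ("cat" follows "the" twice, "mat" once)
```

A model like this looks only one word back. The attention mechanism described above is what lets a modern model weigh every earlier word in the context instead.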

Large language models cannot be directly applied to music as the basic unit being predicted is different. For large language models, the basic unit is the word. For music, the basic unit can be a note, but it can also be the tuple that we have generated in Skiptune. Recall that each tuple contains the difference in pitch from one note to the next, and the second note’s duration divided by the first note’s duration.
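As an illustration of the tuple described above, the sketch below converts a short melody into (pitch difference, duration ratio) pairs. The input format is an assumption made for the example: each note is a MIDI pitch number paired with a duration in quarter notes, so pitch differences come out in semitones. The function name `to_tuples` is hypothetical and is not part of Skiptune.

```python
from fractions import Fraction


def to_tuples(notes):
    """Convert [(pitch, duration), ...] into [(pitch_step, duration_ratio), ...].

    pitch_step     = second note's pitch minus first note's pitch
    duration_ratio = second note's duration divided by first note's duration
    """
    return [
        (next_pitch - pitch, Fraction(next_dur) / Fraction(dur))
        for (pitch, dur), (next_pitch, next_dur) in zip(notes, notes[1:])
    ]


# C4 quarter, D4 quarter, E4 half, C4 quarter (assumed encoding, see above)
melody = [(60, 1), (62, 1), (64, 2), (60, 1)]
tuples = to_tuples(melody)
print(tuples)

# The same tune transposed up a fifth and played twice as slowly
# produces exactly the same tuples.
transposed = [(pitch + 7, dur * 2) for pitch, dur in melody]
print(to_tuples(transposed) == tuples)  # True
```

For this melody the pitch steps are +2, +2, and -4 and the duration ratios are 1, 2, and 1/2. Because the tuples record only relative changes, a tune yields the same sequence in any key and at any tempo.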

Large language models could have been developed using the 26 letters of the English alphabet as their basic units. However, the AI would then have to learn words by itself, a daunting task. So researchers made it easier for the AI by using English words. Drawing that analogy to music, we could use the 12 notes of the chromatic scale as our basic units. But that would mean the AI would have to learn a vocabulary of “words” consisting of one note following another note. By using tuples, we make it easier for the AI to learn our melodies.

AI requires a lot of data, which is why we haven’t yet applied the “transformers with attention” technology to the Skiptune database. One never knows for sure whether one has enough data, but we need enough tunes to divide them into a training set, a validation set, and a test set, none of which can share tunes with the others. As of January 2026, we have frozen the Skiptune database at 82,000 unique melodies in order to assess whether we have enough musical data to train an AI model to generate new melodies.
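One common way to build three non-overlapping sets is to shuffle the tune identifiers once and cut the shuffled list into consecutive slices. The sketch below shows the idea. The 80/10/10 proportions, the use of integer tune IDs, and the function name `split_tunes` are assumptions for illustration, not a description of how the Skiptune data will actually be divided.

```python
import random


def split_tunes(tune_ids, train_pct=80, validation_pct=10, seed=0):
    """Shuffle tune IDs and cut them into training, validation, and test sets.

    Each ID lands in exactly one set, so no set shares tunes with another.
    Whatever remains after the first two slices becomes the test set.
    """
    ids = list(dict.fromkeys(tune_ids))  # drop duplicate IDs, keep order
    random.Random(seed).shuffle(ids)     # seeded so the split is repeatable
    n_train = len(ids) * train_pct // 100
    n_validation = len(ids) * validation_pct // 100
    training = ids[:n_train]
    validation = ids[n_train:n_train + n_validation]
    test = ids[n_train + n_validation:]
    return training, validation, test


# 82,000 stand-in IDs, matching the size of the frozen database.
training, validation, test = split_tunes(range(82_000))
print(len(training), len(validation), len(test))  # 65600 8200 8200
```

With these assumed proportions, 82,000 melodies yield 65,600 for training and 8,200 each for validation and testing.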